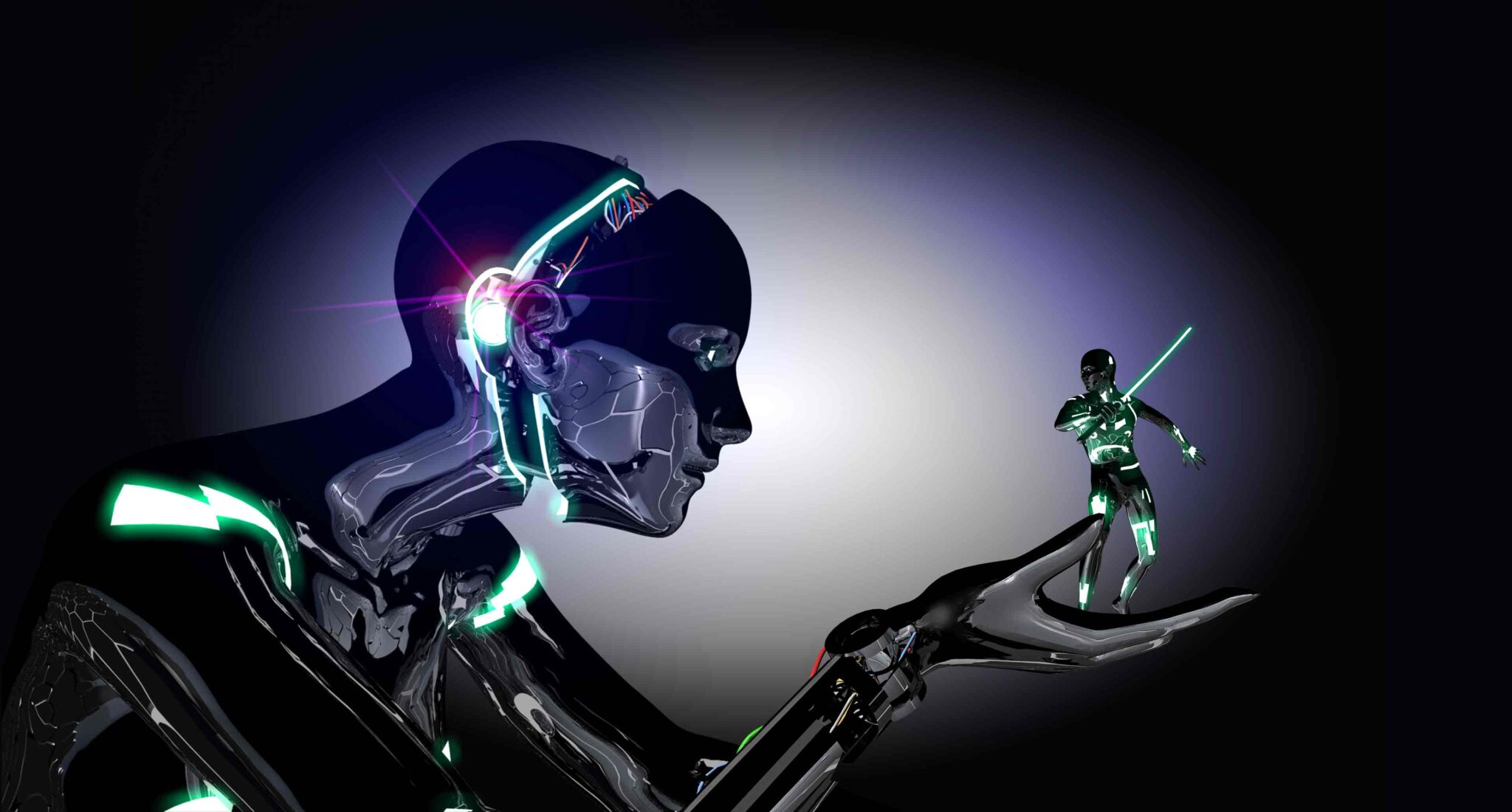

Every click, scroll, and pause you make is teaching a machine what stories to serve you—and which ones to bury. The result? Your version of reality isn’t the truth. It’s the feed.

For thousands of years, storytelling belonged to humans. Campfires. Libraries. Stages. Then came television, cinema, and the great publishing houses. But today, your story isn’t just told by you—it’s curated, contorted, and commodified by algorithms you’ll never meet.

Forget literary gatekeepers. The platforms we depend on for reach (Instagram, TikTok, YouTube, LinkedIn) don’t simply deliver stories; they actively shape them. Your opening hook, your pacing, even the “hero” of your story are decided not by your artistic instinct, but by machine-led probability models.

Insiders call it “algorithmic plot steering”. If the system predicts higher engagement from outrage, your story gets an antagonist. If it sees curiosity spikes at the 14-second mark, you’ll find your video cut there—whether you like it or not.

Most creators obsess over likes, shares, and impressions. But in certain closed-door analytics dashboards, shadow metrics rule the game.

Only a small handful of agencies—and a few big-name brands—have full access to these dashboards. Everyone else is telling stories blindfolded.

In elite content studios from New York to Seoul, creative teams now build stories backwards—starting not from inspiration, but from data maps showing where the audience’s dopamine peaks occur.

The moral arc of the narrative? Secondary.

The truth of the message? Negotiable.

The engagement spike? Non-negotiable.

One award-winning creative director—who shall remain nameless—admitted over dinner at a private festival lounge in Cannes:

“We’re not storytellers anymore. We’re behavioural architects with cameras.”

At a high-profile media summit in Singapore, an unguarded panel conversation between a former tech exec and a well-known filmmaker revealed something chilling: certain platforms ghost-throttle creators who deviate from the “optimal emotional range” their audience data suggests.

The implication? The algorithm knows what you’re “allowed” to feel before your audience even sees your work. And it will quietly starve your reach if you try to go off-script.

Rumour has it that at least two major publishing imprints have begun rejecting manuscripts not because of quality, but because early AI-driven tests predict low “algorithmic compatibility scores” for the author’s persona.

If you’re not actively designing your story for, and against, the algorithm, you’re not telling your story. You’re telling theirs.

Recent scientific attention surrounding compounds in extra virgin olive oil and their potential relationship to Alzheimer’s disease has reignited global interest in preventative brain health. Research involving polyphenols such as oleocanthal suggests certain compounds found in olive oil may assist the brain’s natural clearance systems associated with toxic proteins linked to neurodegeneration. While social media headlines often exaggerate findings, the deeper story is profoundly important: humanity is entering an era where cognitive decline may become one of the defining economic, medical, and existential crises of the 21st century. The future battle over ageing is no longer simply about living longer. It is about preserving consciousness itself.

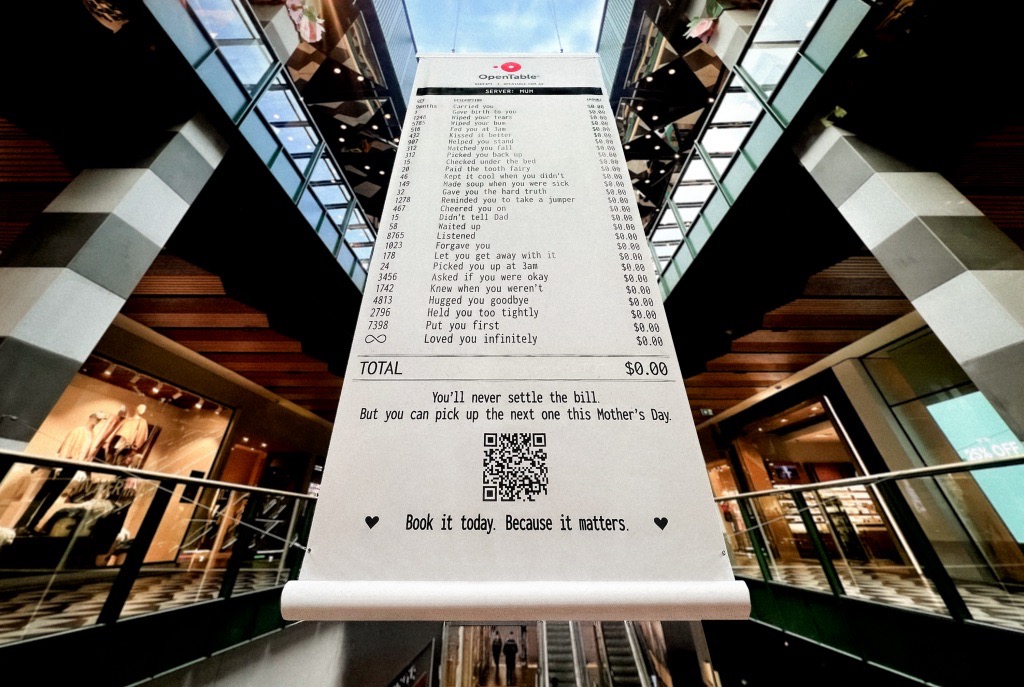

A Mother’s Day campaign by OpenTable recently circulated online featuring a mock restaurant receipt listing thousands of invisible maternal acts — “carried you,” “wiped your tears,” “waited up,” “loved you infinitely” — all priced at $0.00. The advertisement was emotionally devastating because it exposed a truth modern economies systematically ignore: the most civilisation-sustaining labour in human history has largely remained unpaid, feminised, invisible, and emotionally expected. The campaign was not simply clever marketing. It revealed how contemporary capitalism increasingly monetises emotional recognition precisely because society has failed to structurally value care itself.

Meryl Streep being named the greatest actress of the 21st century is less surprising than what the announcement reveals about Hollywood itself. Streep represents a fading era of performance rooted in theatrical discipline, literary depth, emotional intelligence, and institutional seriousness. At a time when entertainment ecosystems increasingly prioritise franchise scalability, algorithmic engagement, and short-form attention extraction, her career stands as evidence of what cinema once demanded — and what modern systems may be quietly abandoning.