Artificial intelligence is often presented as a triumph of engineering and computational scale, yet its true foundation is neither autonomous nor purely technical. It is built continuously, incrementally, and globally through human interaction that is largely unrecognised and uncompensated. Every click, correction, upload, and behavioural signal contributes to the training and refinement of AI systems, forming a vast, distributed layer of labour embedded within everyday digital life. This labour is not formally acknowledged, yet it generates immense value for platforms that aggregate, structure, and monetise it. The result is a quiet inversion of traditional economic models: users are no longer merely consumers, but active contributors to production—without ownership, compensation, or control. This editorial examines how data functions as labour, how platforms extract value from participation, and why the economic architecture of artificial intelligence raises fundamental questions about fairness, ownership, and the future of human agency in digital systems.

The concept of labour within the digital economy requires redefinition, as traditional frameworks that associate labour with formal employment and direct compensation fail to account for the ways in which value is generated through participation in digital systems. Users interacting with platforms are not typically understood as workers, yet their actions produce data that is essential for the development and operation of AI technologies. This data is not incidental but foundational, as it provides the patterns, signals, and contextual information that allow models to learn, adapt, and improve over time.

Every interaction within a digital environment contributes to this process, whether through explicit actions such as creating content and providing feedback or through implicit behaviours such as viewing, clicking, and navigating. Platforms such as YouTube exemplify this dynamic: user engagement generates detailed datasets that capture not only preferences but also patterns of attention, timing, and response. These datasets are used to refine recommendation systems and optimise content delivery, and they can inform broader models of user behaviour whose applications extend beyond the platform itself.

The transformation of behaviour into data introduces a layer of value extraction that operates continuously and at scale, converting everyday actions into inputs for systems that generate economic returns. This process is not transparent, as the mechanisms through which data is collected, structured, and utilised are often obscured by the complexity of the platforms that manage them. Users engage with interfaces designed for interaction, not for revealing the underlying processes that translate their activity into value.

Moderation systems provide a more visible example of how human input is integrated into AI development. When users identify and categorise content that violates platform standards, their judgements can serve as training examples for automated systems that perform similar tasks at scale, effectively encoding human judgement into algorithmic processes. While moderation is often framed as a community function, it also represents a form of distributed labour that supports the operation and improvement of platform infrastructure.

The economic structure that emerges from this model is characterised by a significant asymmetry between contribution and compensation, as the value generated by user activity is captured primarily by the platforms that aggregate and process the data. These platforms monetise user engagement through advertising, subscriptions, and the licensing of data-driven technologies, creating revenue streams that are directly linked to the volume and quality of user interactions. The users themselves receive access to services, but not a proportional share of the value derived from their contributions.

This asymmetry is reinforced by the collective nature of data, which complicates the attribution of value to individual contributions and thereby limits the feasibility of traditional compensation models. Data generated by multiple users is aggregated into datasets that derive their utility from scale and diversity, making it difficult to isolate the impact of any single input: removing one person's activity from a dataset built from millions of users would rarely change the resulting system in any measurable way, even though the dataset as a whole is indispensable. This collective characteristic allows platforms to treat data as a shared resource while retaining control over its monetisation.

The question of whether data constitutes labour is therefore not merely theoretical but central to understanding the economic dynamics of AI, as it challenges existing assumptions about how value is created and distributed within digital systems. If user activity is recognised as a form of labour, then the current model represents a significant imbalance in which contributors are not compensated for the value they generate. If it is not recognised as labour, the value extracted from participation does not disappear; it simply goes unaccounted for within frameworks that have no category for it.

Emerging discussions around data ownership and compensation reflect growing awareness of this imbalance, as proposals for data dividends, cooperative platform models, and user-controlled data ecosystems seek to address the gap between contribution and reward. These proposals face practical challenges related to valuation, implementation, and governance, yet they indicate a shift in how the role of users within digital systems is being conceptualised. The recognition of data as an asset that can be owned, controlled, and monetised introduces new possibilities for restructuring the relationship between platforms and participants.

The ethical implications of this model extend beyond economic considerations, as the reliance on human-generated data raises questions about representation, bias, and the distribution of influence within AI systems. The data used to train these systems reflects the behaviours, preferences, and perspectives of the populations that generate it, meaning that the outputs of AI are shaped by the characteristics of the underlying dataset. Ensuring that these outputs are fair, accurate, and representative requires an understanding of how data is collected and whose contributions are included.

The integration of AI into economic and social systems further amplifies these considerations, as the decisions and recommendations produced by these technologies influence outcomes across a range of domains, from employment and finance to healthcare and governance. The hidden labour that underpins AI is therefore not only a matter of economic fairness but of systemic impact, as it shapes the capabilities and limitations of technologies that are increasingly central to modern life.

Examining the hidden labour behind AI matters because it exposes the structural foundations of a technology that is often perceived as autonomous, and shows the extent to which human activity remains central to its development and operation. Recognising this foundation is essential for understanding how value is created within digital systems and for addressing the imbalances that arise when contributions are not matched by compensation.

For individuals, this awareness provides a basis for more informed engagement with digital platforms, encouraging consideration of how participation contributes to broader systems of value creation. For policymakers, it underscores the need to develop frameworks that account for the role of data as both an economic asset and a form of labour, balancing innovation with fairness. For companies, it presents an opportunity to build trust and differentiate themselves by adopting models that are more transparent and equitable in their treatment of user contributions.

The future of AI will be shaped not only by advances in technology but by decisions about how the value it generates is distributed, and whether the systems that underpin it evolve to reflect the contributions of those who make it possible. Understanding the hidden labour behind AI is therefore not simply an analytical exercise but a necessary step in designing systems that align with broader principles of equity and sustainability.

Recent scientific attention surrounding compounds in extra virgin olive oil and their potential relationship to Alzheimer’s disease has reignited global interest in preventative brain health. Research involving polyphenols such as oleocanthal suggests certain compounds found in olive oil may assist the brain’s natural clearance systems associated with toxic proteins linked to neurodegeneration. While social media headlines often exaggerate findings, the deeper story is profoundly important: humanity is entering an era where cognitive decline may become one of the defining economic, medical, and existential crises of the 21st century. The future battle over ageing is no longer simply about living longer. It is about preserving consciousness itself.

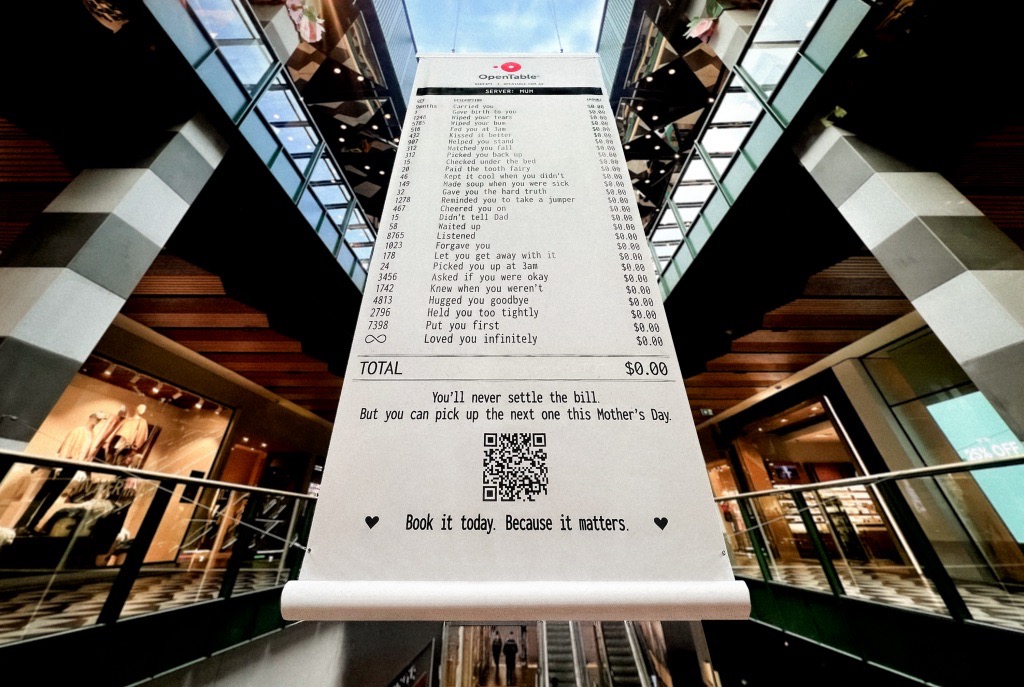

A Mother’s Day campaign by OpenTable recently circulated online featuring a mock restaurant receipt listing thousands of invisible maternal acts — “carried you,” “wiped your tears,” “waited up,” “loved you infinitely” — all priced at $0.00. The advertisement was emotionally devastating because it exposed a truth modern economies systematically ignore: the most civilisation-sustaining labour in human history has largely remained unpaid, feminised, invisible, and emotionally expected. The campaign was not simply clever marketing. It revealed how contemporary capitalism increasingly monetises emotional recognition precisely because society has failed to structurally value care itself.

Meryl Streep being named the greatest actress of the 21st century is less surprising than what the announcement reveals about Hollywood itself. Streep represents a fading era of performance rooted in theatrical discipline, literary depth, emotional intelligence, and institutional seriousness. At a time when entertainment ecosystems increasingly prioritise franchise scalability, algorithmic engagement, and short-form attention extraction, her career stands as evidence of what cinema once demanded — and what modern systems may be quietly abandoning.