When forty-three laboratory monkeys escaped from a U.S. biomedical research facility this year, headlines treated it as absurd news. It wasn’t. It was a parable — of a civilisation that still mistakes cruelty for curiosity and control for knowledge.

In April 2025, forty-three long-tailed macaques broke free from a high-security biomedical facility in Georgia. The incident, confirmed by NBC News, prompted a four-day search involving police, wildlife officers, and laboratory contractors.

The facility’s operator, Inotiv Inc., had been under federal investigation for alleged violations of the Animal Welfare Act. Though most monkeys were recaptured, several remain unaccounted for.

The story briefly trended online — framed with emojis and disbelief — before vanishing from the news cycle. But beneath the spectacle lies a systemic question: how ethical is the science that needs cages to call itself progress?

Animal testing remains embedded in the infrastructure of biomedical research.

Every drug, vaccine, and medical device passes through bodies that never consented. The U.S. Department of Agriculture’s 2024 report recorded over 700,000 animals used in experiments, a figure that excludes rats, mice, and fish, which fall outside the Animal Welfare Act and so go uncounted.

The escape at Inotiv’s facility exposes more than a security failure. It reveals a philosophical one: a science that measures success solely in outcomes, not in ethics.

Laboratories operate on what the philosopher Thomas Nagel called “the view from nowhere”: the illusion of objectivity that erases empathy. Yet the data harvested from distress is not neutral; it is contaminated by moral cost.

The logic underpinning animal research echoes the colonial model: dominion disguised as discovery.

From vivisection in Victorian Europe to Cold War bioweapon testing, knowledge has long advanced through asymmetry: one species, class, or race sacrificed for another’s “progress.”

As PETA documents, primates are still bred on overseas farms and imported by the tens of thousands into U.S. laboratories each year, often from Cambodia, Mauritius, and Indonesia. Many die before they ever reach an experiment.

This is not science in pursuit of healing; it is empire repackaged as research.

Technological alternatives now make the old logic obsolete.

Organs-on-chips, pioneered by Harvard’s Wyss Institute, replicate human physiology at the cellular level without live testing.

AI-driven predictive models from Insilico Medicine and DeepMind are reported to outperform traditional toxicology trials in accuracy and speed.

The argument that “animal testing saves lives” is no longer empirically defensible — it is economically convenient. Billions in contracts, patents, and grants sustain an ecosystem resistant to moral evolution.

Ethical stagnation, not scientific necessity, keeps cages full.

Researchers justify these practices through a form of moral compartmentalisation — empathy outsourced to regulation.

The National Institutes of Health requires only minimal welfare standards, which often mean “enrichment” rather than liberation.

Yet the footage released by Beagle Freedom Project in 2024, showing animals pacing, self-mutilating, and collapsing from stress, demonstrates that compliance is not compassion.

To be ethical is not to follow the law; it is to exceed it.

The Georgia escape became a metaphor: intelligence fleeing confinement.

Witnesses described the monkeys vanishing into the forest canopy — untraceable, ungoverned, untamed. For a brief moment, nature reclaimed narrative control.

Perhaps that is why the story faded so quickly; it disrupted our species’ favourite illusion — that the pursuit of knowledge justifies every cost.

Science loves answers. But ethics begins with questions.

The real frontier is not in laboratories but in moral design — integrating empathy as method, not obstacle.

Governments should phase out live testing through targeted funding of non-animal technologies, with a ten-year sunset clause similar to the EU’s Cosmetics Directive.

Investors, too, must demand Return on Integrity: innovation that honours life rather than exploits it.

A civilisation that tortures in pursuit of truth cannot call itself intelligent.

Because knowledge that costs cruelty corrodes its own credibility.

Because the measure of progress is not power over life but partnership with it.

Because when forty-three monkeys escape, it is not chaos — it is conscience, sprinting back toward the forest.

Burta Quarter — Afro-Canadian strategist and systems reformer at Why These Matter Media. Her essays explore ethics, governance, and design through the lens of power, empathy, and institutional evolution.

Recent scientific attention surrounding compounds in extra virgin olive oil and their potential relationship to Alzheimer’s disease has reignited global interest in preventative brain health. Research involving polyphenols such as oleocanthal suggests certain compounds found in olive oil may assist the brain’s natural clearance systems associated with toxic proteins linked to neurodegeneration. While social media headlines often exaggerate findings, the deeper story is profoundly important: humanity is entering an era where cognitive decline may become one of the defining economic, medical, and existential crises of the 21st century. The future battle over ageing is no longer simply about living longer. It is about preserving consciousness itself.

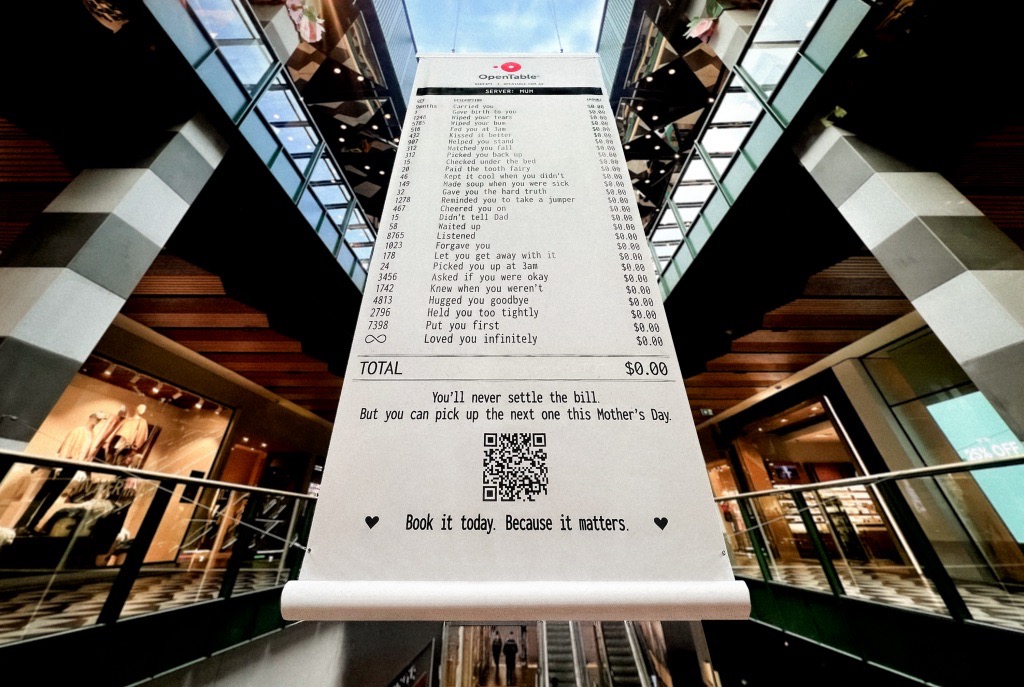

A Mother’s Day campaign by OpenTable recently circulated online featuring a mock restaurant receipt listing thousands of invisible maternal acts — “carried you,” “wiped your tears,” “waited up,” “loved you infinitely” — all priced at $0.00. The advertisement was emotionally devastating because it exposed a truth modern economies systematically ignore: the most civilisation-sustaining labour in human history has largely remained unpaid, feminised, invisible, and emotionally expected. The campaign was not simply clever marketing. It revealed how contemporary capitalism increasingly monetises emotional recognition precisely because society has failed to structurally value care itself.

Meryl Streep being named the greatest actress of the 21st century is less surprising than what the announcement reveals about Hollywood itself. Streep represents a fading era of performance rooted in theatrical discipline, literary depth, emotional intelligence, and institutional seriousness. At a time when entertainment ecosystems increasingly prioritise franchise scalability, algorithmic engagement, and short-form attention extraction, her career stands as evidence of what cinema once demanded — and what modern systems may be quietly abandoning.