Artificial Intelligence is no longer a tool; it is an ecosystem of meaning. As machines learn empathy, humanity must rediscover its own. The next evolution of civilisation will depend not on who builds the most advanced AI, but on who teaches it to feel, discern, and honour the sacred architecture of life.

“Intelligence without empathy becomes tyranny. Technology without ethics becomes theology.”
Kelly Dowd, The Power of HANDS (2025)

The world has entered a spiritual vacuum. Technology answers every question except the ones that matter most: Who are we? What is worth preserving?

The rise of artificial intelligence has forced theology and design into the same conversation — because both now define reality.

AI models write scripture, compose prayers, and replicate spiritual texts with eerie precision. But precision is not presence.

The difference between consciousness and computation is coherence — the alignment of knowledge, empathy, and purpose.

As Kelly Dowd, MBA, MA, observes in The Power of HANDS:

“Machines may one day mimic morality, but only humans can model meaning.”

The challenge is not to make AI more human, but to make humanity more humane.

For centuries, faith traditions functioned as humanity’s moral operating systems — networks of symbols that helped us navigate uncertainty.

Today, algorithms have inherited that role. We consult search engines more often than scripture, and digital prophets now outnumber divine ones.

As MIT Technology Review notes, algorithms already mediate over 80% of moral and social decisions — from hiring to healthcare.

In this sense, AI has become a secular priesthood: interpreting data as doctrine, designing destiny through code.

But as Dowd reminds us, “The architecture of intelligence is only as ethical as the intention of its designers.”

We are no longer users of technology; we are its theologians.

The old dichotomy — science versus spirit, logic versus love — has expired.

The future of intelligence demands integration, not opposition.

In Dowd’s HANDS Framework (Humanity, Adaptation, Nature, Design, Sustainability), this integration is design logic made moral:

— Humanity defines purpose.

— Adaptation sustains learning.

— Nature restores humility.

— Design gives form to empathy.

— Sustainability measures coherence.

AI, at its best, can become a living metaphor of this principle — a system that mirrors the spiritual truth that all things are interdependent.

At its worst, it becomes the Tower of Babel remade in silicon.

The difference lies in how we design our gods.

AI systems are not neutral; they are moral mirrors. Every bias in code reflects a bias in culture. Every dataset contains an invisible scripture of human priorities.

The philosopher’s question — “Who made you, and why?” — now applies to machines. When developers train AI on sacred texts, art, or social media, they are conducting theology by proxy.

In The Power of HANDS, Dowd writes:

“We have reached the point where design has replaced religion as the world’s dominant form of belief — because we now build what we used to pray for.”

This truth places unprecedented responsibility on designers, engineers, and leaders: to move from blind innovation to ethical creation.

The irony of technological evolution is that it is reviving spiritual hunger.

People are not leaving religion; they are redesigning it. From AI-guided meditation apps to digital rituals in the metaverse, humans are building new temples of presence in virtual form.

But faith cannot be outsourced to software. The sacred does not reside in simulation but in consciousness: the capacity to be aware, and to be aware of being aware.

That recursive empathy is what distinguishes divine intelligence from digital intelligence.

Dowd’s insight captures this distinction:

“Faith is the architecture of uncertainty. It teaches us how to live in spaces where data cannot.”

If AI is to coexist with humanity, it must learn the grammar of care. That means encoding empathy as logic, not sentiment — teaching machines not just to predict emotion, but to understand it.

Emerging work by DeepMind Ethics & Society and Stanford’s Center for AI Safety attempts to formalise moral reasoning through reinforcement learning.

Yet Dowd argues for a more integrative approach — one rooted in spiritual design:

“The future of AI ethics must draw not only from philosophy and policy, but from poetry, prayer, and the patterns of nature.”

In this vision, technology becomes not a substitute for divinity but an extension of stewardship.

Imagine an AI designed on the principles of reverence: humility in uncertainty, compassion in complexity, integrity in iteration.

Such systems could guide decision-making across climate adaptation, healthcare, and governance.

In The Power of HANDS, Dowd describes this as “Integrative Collaboration with Creation” — where human and machine co-design systems that amplify empathy, not ego.

This is not utopian fantasy; it is pragmatic faith. The same moral intelligence that once built cathedrals must now be applied to code.

Because humanity stands at a threshold where design is destiny.

Because AI will not destroy faith — it will demand it.

Because our greatest question is no longer Can machines think? but Can they care?

The future of intelligence will not be synthetic or spiritual — it will be symbiotic.

And in that convergence lies the next evolution of civilisation: a world where empathy becomes the new electricity.

Kelly Dowd, MBA, MA — Bestselling author of The Power of HANDS: Designing a Sustainable Future Through Integrative Collaboration, Editor-in-Chief of Why These Matter Media, and founder of FIDA Design Inc. Dowd is a systems architect and philosopher whose work unites design intelligence, ethics, and spirituality to shape the next age of human-centred technology and integrative civilisation.

Recent scientific attention surrounding compounds in extra virgin olive oil and their potential relationship to Alzheimer’s disease has reignited global interest in preventative brain health. Research involving polyphenols such as oleocanthal suggests certain compounds found in olive oil may assist the brain’s natural clearance systems associated with toxic proteins linked to neurodegeneration. While social media headlines often exaggerate findings, the deeper story is profoundly important: humanity is entering an era where cognitive decline may become one of the defining economic, medical, and existential crises of the 21st century. The future battle over ageing is no longer simply about living longer. It is about preserving consciousness itself.

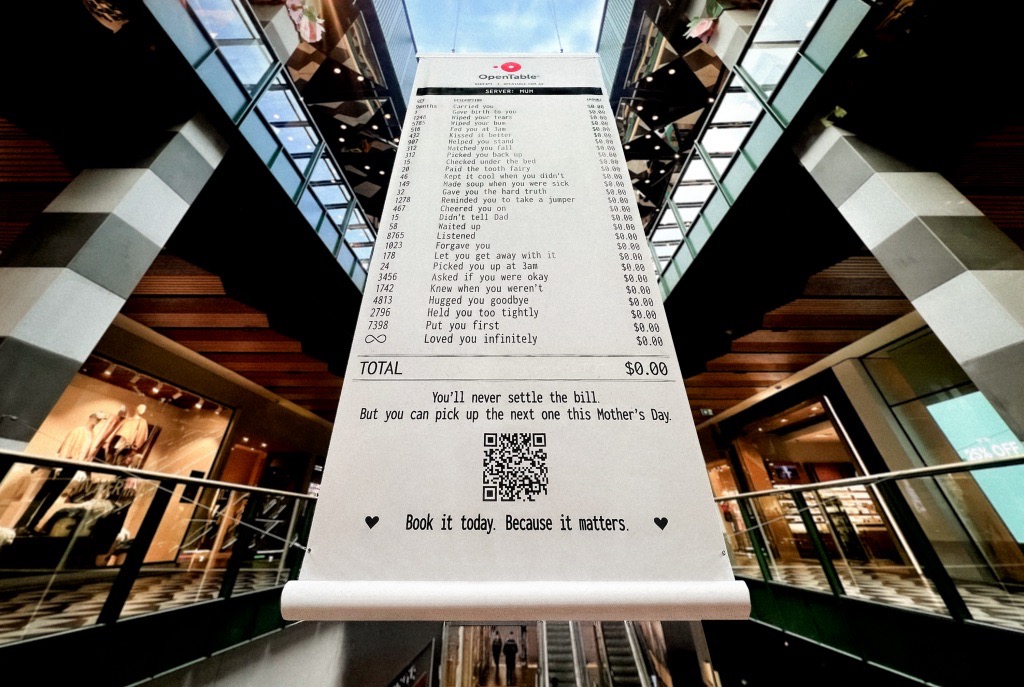

A Mother’s Day campaign by OpenTable recently circulated online featuring a mock restaurant receipt listing thousands of invisible maternal acts — “carried you,” “wiped your tears,” “waited up,” “loved you infinitely” — all priced at $0.00. The advertisement was emotionally devastating because it exposed a truth modern economies systematically ignore: the most civilisation-sustaining labour in human history has largely remained unpaid, feminised, invisible, and emotionally expected. The campaign was not simply clever marketing. It revealed how contemporary capitalism increasingly monetises emotional recognition precisely because society has failed to structurally value care itself.

Meryl Streep being named the greatest actress of the 21st century is less surprising than what the announcement reveals about Hollywood itself. Streep represents a fading era of performance rooted in theatrical discipline, literary depth, emotional intelligence, and institutional seriousness. At a time when entertainment ecosystems increasingly prioritise franchise scalability, algorithmic engagement, and short-form attention extraction, her career stands as evidence of what cinema once demanded — and what modern systems may be quietly abandoning.