The crisis in education is not a failure of funding; it is a failure of philosophy and design. Around the world, intelligence has become political, teachers have become targets, and truth itself has become negotiable. The war on education is the quietest, most consequential conflict of our time.

In 2025, the world is witnessing a paradox: humanity’s greatest access to information coincides with its deepest distrust of knowledge.

Across democracies and dictatorships alike, intellectualism is being reframed as elitism. Experts are discredited, educators are attacked, and schools are recast as ideological battlegrounds.

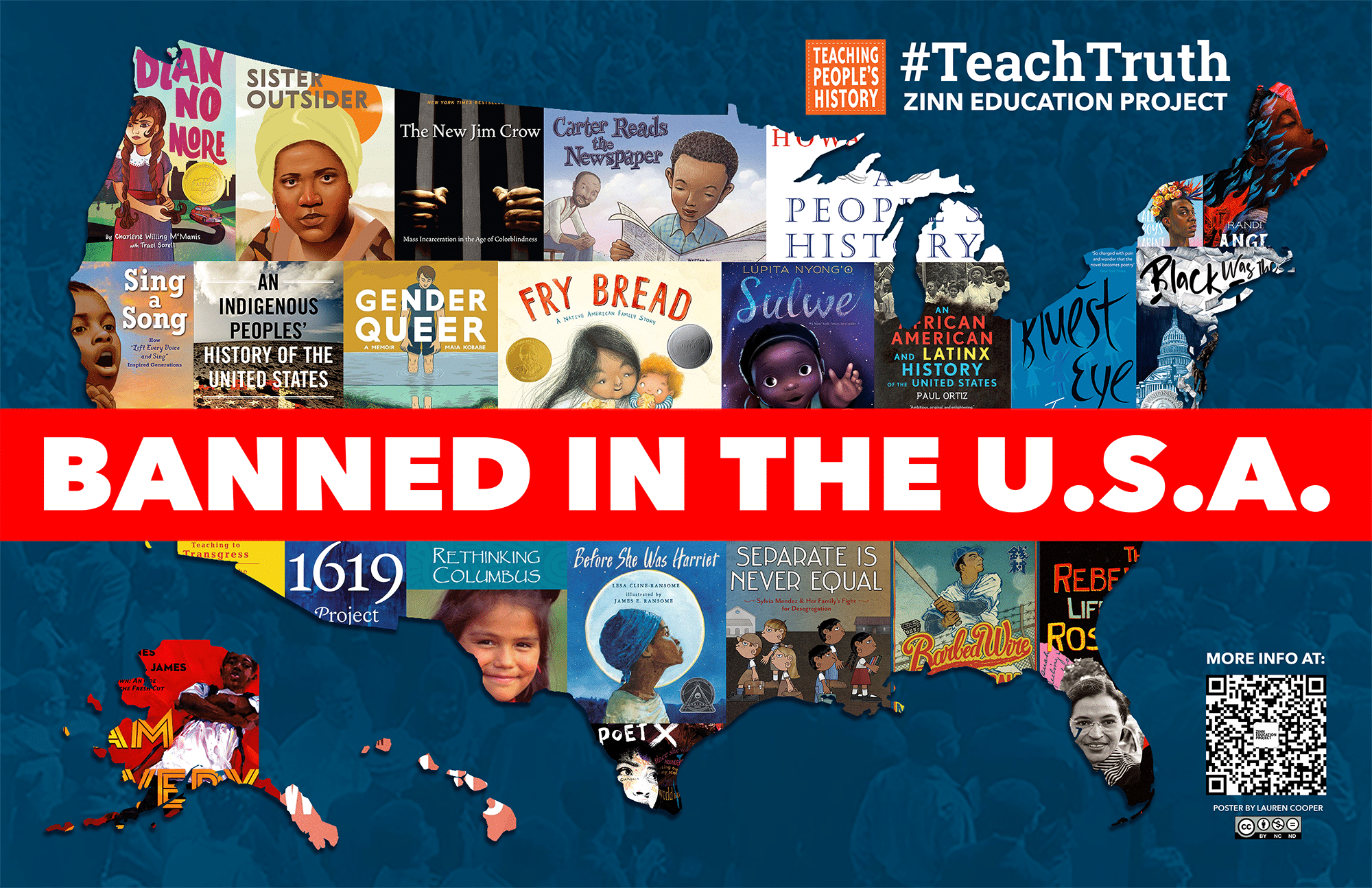

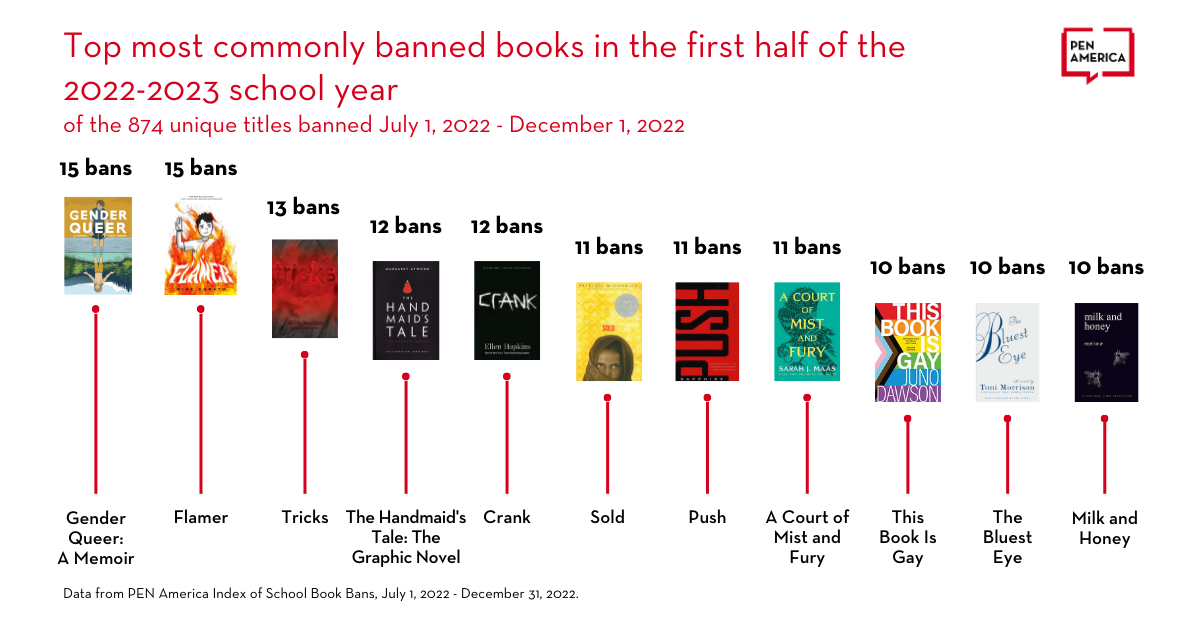

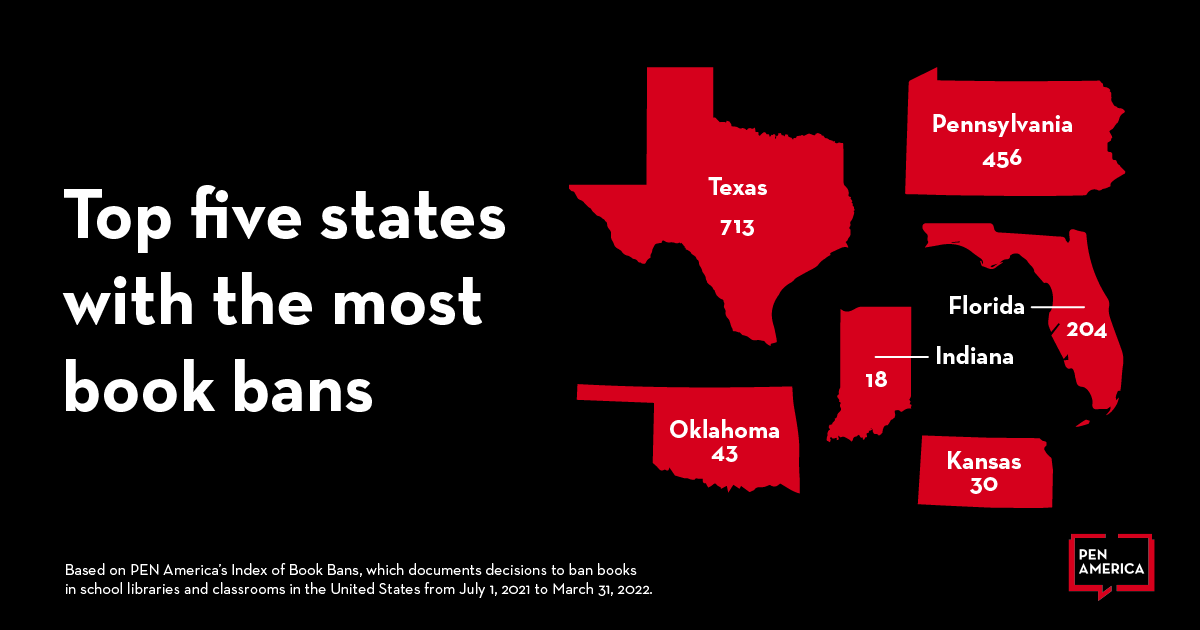

In the United States, book bans have surged across 40 states. In India, academic curricula have been politically sanitised. In Hungary and Florida alike, history itself is under revision.

This is not coincidence — it is coordination.

Populism has discovered its most potent enemy: the informed mind.

Education has never been neutral. It shapes power by shaping perception.

Historically, empires from Rome to Britain maintained control through a cultural curriculum.

What is new in this century is the scale of manipulation and the sophistication of the methods.

Social media, political propaganda, and algorithmic personalisation have merged into an invisible education system — teaching millions daily, without syllabus or scrutiny.

As The Economist reported, “The world’s largest school is now the internet, and its teachers are whoever wins the algorithm.”

When truth becomes optional, learning becomes ornamental.

The devaluation of teachers and public schools is not only ideological—it is financial.

Private education companies, testing corporations, and political think tanks now profit from chaos.

A 2025 OECD study found that education inequality has doubled in a decade, driven by a $300 billion global shadow market of unregulated digital tutoring platforms.

The free market has colonised the classroom.

Knowledge, once a public good, has been privatised into a subscription model.

Ignorance, ironically, is now the most lucrative product on Earth.

Educators, once the guardians of collective consciousness, have become scapegoats for cultural anxiety.

In Brazil, teachers have been assaulted for teaching gender equality. In the U.S., educators face lawsuits for discussing race or climate science. In Afghanistan and Iran, teaching girls remains an act of rebellion punishable by imprisonment or death.

The result is moral erosion disguised as curriculum reform.

Societies claim to protect children from discomfort, when in truth they are protecting ideology from scrutiny.

Education is not being reformed—it is being rewritten.

In the attention economy, distraction is design.

Students now compete not with ignorance but with infinite noise.

TikTok, YouTube, and gamified learning platforms promise “engagement,” but as MIT’s Media Lab found, cognitive retention drops by 60% in attention-fragmented environments.

The human mind, evolved for continuity, is being trained for chaos.

We are producing generations who can absorb everything and understand nothing — intellectually stimulated, spiritually starved.

Women and girls bear the heaviest cost of this crisis.

In Sudan, schoolgirls are abducted amid civil unrest.

In Afghanistan, the Taliban’s ban on secondary and university education for girls and women continues despite global condemnation.

In Pakistan, the global investment in girls’ education that Malala Yousafzai has called for remains underfunded by 70%.

Education is freedom’s foundation — and the first casualty of fear.

Wherever patriarchal systems persist, intelligence becomes subversion.

Artificial intelligence could have democratised education; instead, it risks replicating inequality.

AI tutors, powered by OpenAI and Google DeepMind systems, promise personalised learning. Yet, without ethical frameworks, they mirror existing cultural bias.

As Why These Matter’s HANDS Framework suggests, technology without Humanity and Design becomes disembodied intelligence — efficient but indifferent.

AI must be trained not only on datasets, but on empathy.

Otherwise, we risk building machines that outlearn us in everything except morality.

The war on education cannot be won by teachers alone.

It requires architects, technologists, parents, philosophers, and policymakers to treat learning as infrastructure, not ideology.

We must design schools as sanctuaries of doubt, not factories of certainty.

Finland’s phenomenon-based learning model suggests that curiosity, not conformity, yields innovation.

Rwanda’s post-genocide education reforms show how teaching empathy rebuilds fractured nations.

Knowledge must evolve from memorisation to moral imagination.

Because every dictatorship begins with the death of curiosity.

Because intelligence without ethics becomes exploitation.

Because teaching is the only profession that creates all others.

The war on education is not fought with weapons—it is fought with silence, distraction, and distortion.

To defend learning is to defend humanity itself.

Burta Quarter — Writer, strategist, and education ethicist at Why These Matter Media. Her work explores how culture, governance, and technology intersect to define the moral architecture of intelligence.

Recent scientific attention surrounding compounds in extra virgin olive oil and their potential relationship to Alzheimer’s disease has reignited global interest in preventative brain health. Research involving polyphenols such as oleocanthal suggests certain compounds found in olive oil may assist the brain’s natural clearance systems associated with toxic proteins linked to neurodegeneration. While social media headlines often exaggerate findings, the deeper story is profoundly important: humanity is entering an era where cognitive decline may become one of the defining economic, medical, and existential crises of the 21st century. The future battle over ageing is no longer simply about living longer. It is about preserving consciousness itself.

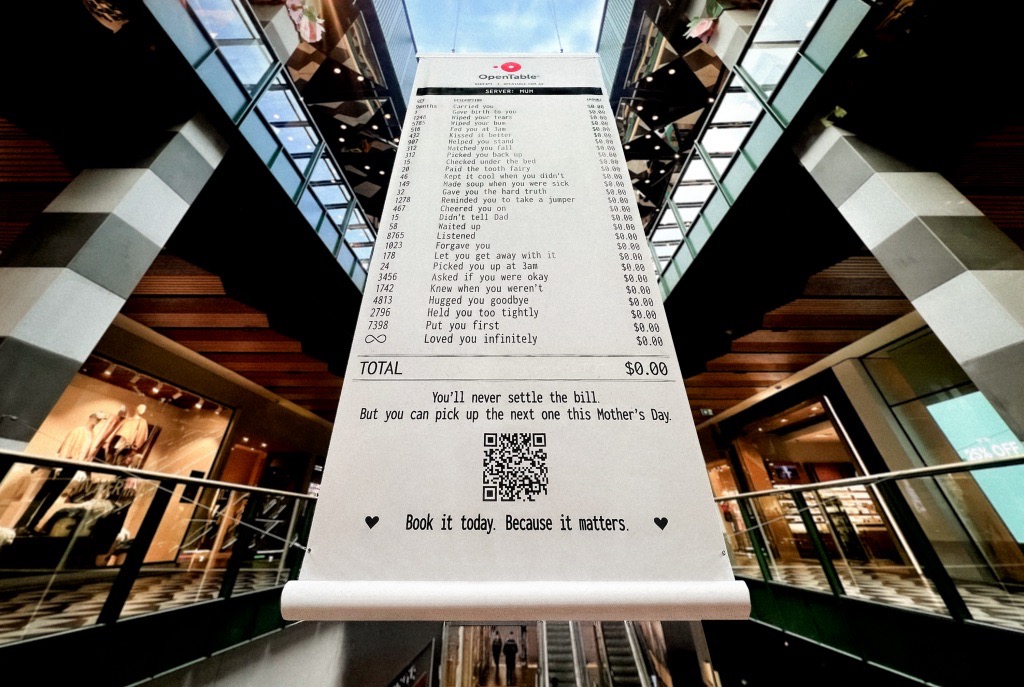

A Mother’s Day campaign by OpenTable recently circulated online featuring a mock restaurant receipt listing thousands of invisible maternal acts — “carried you,” “wiped your tears,” “waited up,” “loved you infinitely” — all priced at $0.00. The advertisement was emotionally devastating because it exposed a truth modern economies systematically ignore: the most civilisation-sustaining labour in human history has largely remained unpaid, feminised, invisible, and emotionally expected. The campaign was not simply clever marketing. It revealed how contemporary capitalism increasingly monetises emotional recognition precisely because society has failed to structurally value care itself.

Meryl Streep being named the greatest actress of the 21st century is less surprising than what the announcement reveals about Hollywood itself. Streep represents a fading era of performance rooted in theatrical discipline, literary depth, emotional intelligence, and institutional seriousness. At a time when entertainment ecosystems increasingly prioritise franchise scalability, algorithmic engagement, and short-form attention extraction, her career stands as evidence of what cinema once demanded — and what modern systems may be quietly abandoning.