Artificial intelligence is not a speculative concept; it is a transformative force already reshaping industries, infrastructure, and human capability. Yet the financial behaviour surrounding it reveals a familiar and recurring dislocation between technological reality and market expectation. The rapid valuation ascent of companies such as NVIDIA signals not only confidence in AI’s future, but a compression of that future into present-day pricing. This compression introduces structural tension, where capital markets begin to reward anticipated outcomes long before underlying systems, adoption cycles, and revenue models have fully matured. As investment concentrates and narratives accelerate, the question is no longer whether AI will change the world, but whether markets have mispriced the timeline of that change. This editorial examines the widening gap between innovation and valuation, arguing that the risk is not technological failure, but financial overextension built on premature certainty.

Every technological revolution carries with it a dual reality, in which genuine transformation coexists with speculative excess, and where the distinction between long-term potential and short-term valuation becomes increasingly difficult to maintain. Artificial intelligence is no exception to this pattern, as its rapid advancement has generated both legitimate breakthroughs and market behaviour that reflects an accelerated projection of future outcomes into present-day pricing. The question that emerges is not whether AI will fundamentally alter economic and social systems, but whether the financial structures surrounding it are aligned with the pace at which those changes can realistically occur.

The current AI landscape is characterised by a concentration of capital and attention around a relatively small number of companies that are perceived to be central to the development and deployment of advanced systems. Among these, NVIDIA has become emblematic of the infrastructure layer that underpins AI, as its GPUs are widely used for training and running large-scale models. The company’s valuation growth reflects not only its current performance but also the expectation that demand for its products will keep expanding fast enough to justify sustained dominance within this emerging sector.

This expectation is rooted in a broader narrative that positions AI as a general-purpose technology capable of transforming multiple industries simultaneously, thereby creating a multiplier effect on economic activity. While this narrative is supported by observable advancements, it also introduces a level of abstraction that allows future potential to be priced into present valuations without a clear timeline for realisation. The result is a market environment in which valuation is driven as much by anticipation as by measurable output.
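The sensitivity of present-day pricing to timing can be made concrete with a simple discounted cash flow sketch. Everything in it is an illustrative assumption rather than an estimate for any company: ten years of 100 units of annual cash flow, a 10% discount rate, a three-year delay, and the hypothetical helper names `present_value` and `delayed`.

```python
def present_value(cash_flows, discount_rate):
    """Discount annual cash flows (received in year 1, 2, ...) back to today."""
    return sum(
        cf / (1 + discount_rate) ** year
        for year, cf in enumerate(cash_flows, start=1)
    )


def delayed(cash_flows, years):
    """Return the same cash flows arriving `years` later, with nothing in between."""
    return [0.0] * years + list(cash_flows)


# Illustrative assumptions only: not figures for any real company.
flows = [100.0] * 10   # ten years of identical cash flows
rate = 0.10            # assumed annual discount rate
delay_years = 3        # assumed slip in the adoption timeline

on_time = present_value(flows, rate)
late = present_value(delayed(flows, delay_years), rate)

print(f"on-time value: {on_time:.2f}")
print(f"delayed value: {late:.2f}")
print(f"delayed / on-time: {late / on_time:.3f}")
```

Under these assumptions the technology delivers exactly the same cash flows in both cases, yet a three-year slip leaves the present value at roughly three quarters of the on-time figure (1 / 1.1³ ≈ 0.751). The outcome has not changed; only the timeline has.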

Historical parallels provide a useful framework for understanding this dynamic, particularly the dot-com era, during which the transformative potential of the internet was correctly identified but significantly mispriced in terms of timing and execution. Companies were valued based on projected dominance within a future digital economy, yet many lacked the infrastructure, user base, or revenue models necessary to sustain those valuations in the short term. The subsequent correction did not invalidate the underlying technology but recalibrated the relationship between expectation and reality.

The distinction between infrastructure and application layers is critical in this context, as it highlights the different rates at which value is realised within a technological ecosystem. Infrastructure providers, such as semiconductor companies and cloud platforms, often capture early value due to their role in enabling development, while application-level innovations may take longer to mature as they require integration into existing workflows, user adoption, and regulatory alignment. This temporal gap creates a situation in which the financial market may overemphasise immediate returns from infrastructure while underestimating the complexity of translating that infrastructure into widespread, profitable applications.

Capital concentration further amplifies this effect, as significant investment flows into a limited set of companies that are perceived as leaders, reinforcing their market position while increasing systemic exposure to their performance. This concentration is not inherently problematic, as it can accelerate development and innovation, but it does create a dependency on a narrow segment of the market to sustain broader expectations. If these companies fail to meet projected growth trajectories, the impact extends beyond individual valuations to affect the overall perception of the sector.

Market psychology plays a central role in sustaining this dynamic, as investors respond not only to data but to narratives that frame AI as an inevitable and immediate driver of economic expansion. These narratives are reinforced by visible successes, such as improvements in language models, image generation, and automation, which provide tangible evidence of progress while also encouraging extrapolation beyond current capabilities. The tendency to extend recent growth in a straight line, when technological progress and adoption rarely follow one, contributes to a misalignment between what is technologically possible and what is economically realised within a given timeframe.
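The extrapolation problem can be sketched numerically. The example below assumes, purely for illustration, that adoption follows a logistic (S-shaped) curve, a common stylised model rather than a claim about how AI adoption will actually unfold. The parameters and the helper names `logistic` and `linear_extrapolation` are hypothetical.

```python
import math


def logistic(t, ceiling, midpoint, steepness):
    """Stylised S-curve: slow start, rapid middle, saturation near `ceiling`."""
    return ceiling / (1 + math.exp(-steepness * (t - midpoint)))


def linear_extrapolation(f, t0, t1, t):
    """Project f forward to time t using the straight-line slope seen between t0 and t1."""
    slope = (f(t1) - f(t0)) / (t1 - t0)
    return f(t1) + slope * (t - t1)


# Illustrative assumptions only.
CEILING = 100.0   # maximum attainable adoption, in arbitrary units
MIDPOINT = 5.0    # year of fastest growth
STEEPNESS = 1.0


def adoption(t):
    return logistic(t, CEILING, MIDPOINT, STEEPNESS)


# An observer at year 5 extends the growth seen between years 4 and 5.
projected = linear_extrapolation(adoption, 4.0, 5.0, 10.0)
actual = adoption(10.0)

print(f"straight-line projection for year 10: {projected:.1f}")
print(f"S-curve value at year 10: {actual:.1f}")
```

With these assumed parameters the straight-line projection for year 10 comes out at about 165, well above the ceiling of 100 that the curve itself can never exceed. The observed growth was real; the error lies in treating the steepest stretch of the curve as permanent.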

The integration of AI into enterprise systems introduces additional layers of complexity that are often underrepresented in market valuations, as organisations must navigate challenges related to data integration, workflow adaptation, security, and regulatory compliance before fully realising the benefits of these technologies. These processes are inherently gradual, requiring iterative implementation and adjustment rather than immediate transformation. The gap between technological capability and organisational readiness becomes a critical factor in determining the pace of adoption.

Regulatory considerations also influence the trajectory of AI development, as governments and institutions seek to establish frameworks that address ethical, legal, and societal implications. These frameworks, while necessary, introduce constraints that can affect the speed at which AI is deployed, particularly in sensitive sectors such as healthcare, finance, and public administration. The interaction between innovation and regulation adds another dimension to the timeline of value realisation, further complicating the relationship between market expectations and practical outcomes.

The question of whether the current AI market represents a bubble is therefore not a binary assessment but a matter of degree, as it involves evaluating the extent to which valuations are aligned with realistic timelines for adoption and revenue generation. It is possible for a technology to be both transformative and overvalued simultaneously, as the validity of the underlying innovation does not guarantee that the financial structures built around it are appropriately calibrated.

Understanding the tension between technological potential and market valuation is essential for navigating the AI landscape, as it informs decisions related to investment, strategy, and policy. For investors, it highlights the importance of distinguishing between long-term value and short-term pricing, recognising that overvaluation can coexist with genuine innovation and that corrections are a natural part of the development cycle. For companies, it underscores the need to align expectations with execution, ensuring that growth strategies are grounded in realistic assessments of adoption and integration.

For the broader economy, the implications extend to systemic stability, as concentrated exposure to a single sector or set of companies increases vulnerability to shifts in perception and performance. A recalibration of valuations, while potentially disruptive in the short term, can contribute to a more sustainable alignment between technological development and economic impact, enabling the sector to mature without the distortions introduced by excessive speculation.

Artificial intelligence will continue to evolve and reshape industries, but the timeline of that transformation will be determined by a complex interplay of technological capability, organisational readiness, and regulatory context. Recognising this complexity is essential for moving beyond simplified narratives and engaging with the realities of how innovation unfolds within economic systems.

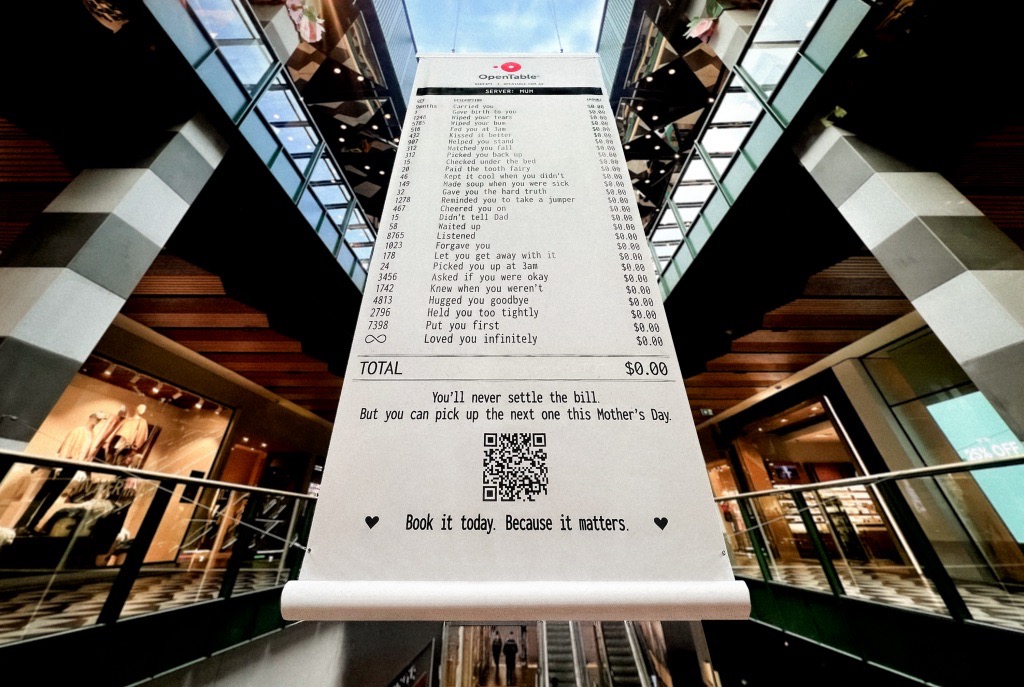
