The global economy is changing fast.

When the gunman, whom prosecutors identify as Luigi Mangione, pulled the trigger, he didn't just end a life. He sent ripples through a system. Brian Thompson wasn't just a CEO; he was the public face of UnitedHealthcare, a corporation woven into the very fabric of American healthcare and global financial markets.

The courtroom ruling that reduced Thompson's killing to “just murder” may comfort statute books. But markets are not ruled by statute; they are ruled by sentiment. And sentiment is fragile. When CEOs become symbols of public anger, the economy trembles. Investors don’t just weigh quarterly earnings; they weigh stability.

• The assassination of a CEO signals systemic vulnerability.

• The failure to call it terrorism signals that the state does not recognize corporate leadership as a national security issue.

• The gap between law and perception becomes a fissure markets cannot price.

The Mangione case matters because it tells investors: the law will not classify violence against corporate leaders as systemic terror. Which means risk is borne not by courts but by markets.

The United States remains the reference point for global corporate law. When a New York judge rules that the killing of a CEO cannot be prosecuted as terrorism under state law, it sets an international tone.

• In Europe, where populism rises and farmers blockade roads, corporate leaders may face similar threats.

• In Latin America, where the line between cartels and corporations blurs, violence against executives is already a shadow economy.

• In Asia, where conglomerates wield state-level power, leaders may find themselves more exposed than ever.

If America refuses to frame violence directed at corporate leaders as terror, others may follow. The precedent travels faster than the ruling itself.

Layer artificial intelligence on top, and the threat compounds.

• Deepfakes can place CEOs in fabricated scandals.

• Algorithms can amplify calls for violence faster than states can respond.

• AI-generated manifestos can radicalize thousands in hours.

• Machine-driven targeting could identify not just CEOs but their families, their schedules, their vulnerabilities.

Without international legal frameworks, AI becomes the silent accelerant. What Mangione is accused of doing alone with a handgun could, in the future, be multiplied by machine intelligence into coordinated, simultaneous acts.

Yet courts remain trapped in 20th-century categories. Our legal picture of terrorism still assumes groups, networks, manifestos. The lone man with AI? Invisible to statute.

The Mangione trial is not only about violence against corporations—it is about the future of rights.

• Speech rights will be tested when AI produces inflammatory content at scale.

• Privacy rights will vanish as corporations surveil to protect themselves.

• Labor rights may shrink as executives retreat behind walls, more accountable to shareholders than to employees.

• Consumer rights could erode as corporations argue that public anger justifies more control, not less.

We may enter a paradoxical era: the more corporations are targeted, the more rights ordinary people lose.

Courts lag. Markets lead. That is the brutal truth.

• Judges dismiss terrorism charges, bound by precedent.

• Markets slash valuations, bound by perception.

• Corporations build higher fortresses, bound by fear.

In the gap between the law’s slow definitions and the market’s instant reactions lies a new instability—one that could rattle not just companies, but nations.

The Mangione case matters because it is a trial in New York with consequences in Shanghai, Paris, São Paulo, and Lagos. It is not about one man or one CEO. It is about the architecture of rights and risks in a world where corporations are symbols, violence is politicized, and AI accelerates everything.

The global economy is already fragile. Trust is already thin. If law continues to lag, markets will decide. And when markets decide, rights often erode.

Because in the end, the question is not whether Mangione will be convicted. The question is whether our systems—legal, corporate, and global—can survive the next collision of rage, rights, and reality.

Kelly Dowd, MBA, MA, is a Nigerian–American designer and systems architect, international bestselling author of The Power of HANDS: Designing a Sustainable Future Through Integrative Collaboration, Editor-in-Chief of Why These Matter Media, and founder of FIDA Design Inc. Dowd's work unites design intelligence, ethics, and spirituality to shape the next age of human-centred technology and integrative civilisation. He created the HANDS Framework (Humanity, Adaptation, Nature, Design, Sustainability) and the Four Ps (People, Planet, Pragmatism, Profit), advancing a pragmatic ethics of innovation: Return on Integrity.


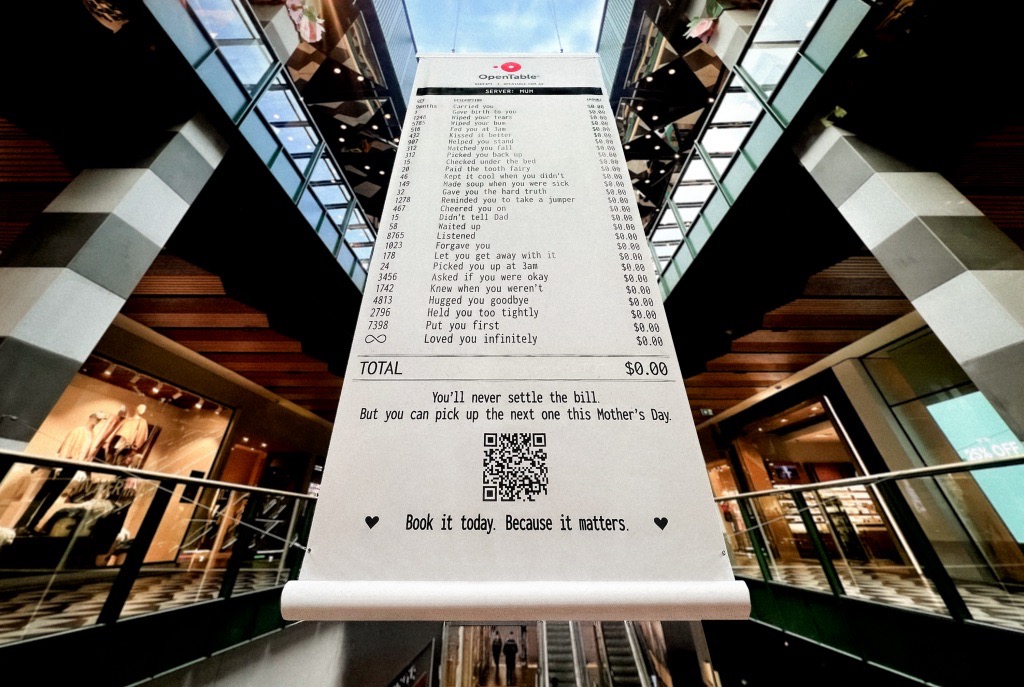

